While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases. We're investing this and working on the next version. The model retains the non-masked contents of the image, but images look less sharp. starting in-painting from a fully masked image), the quality of the image is degraded.

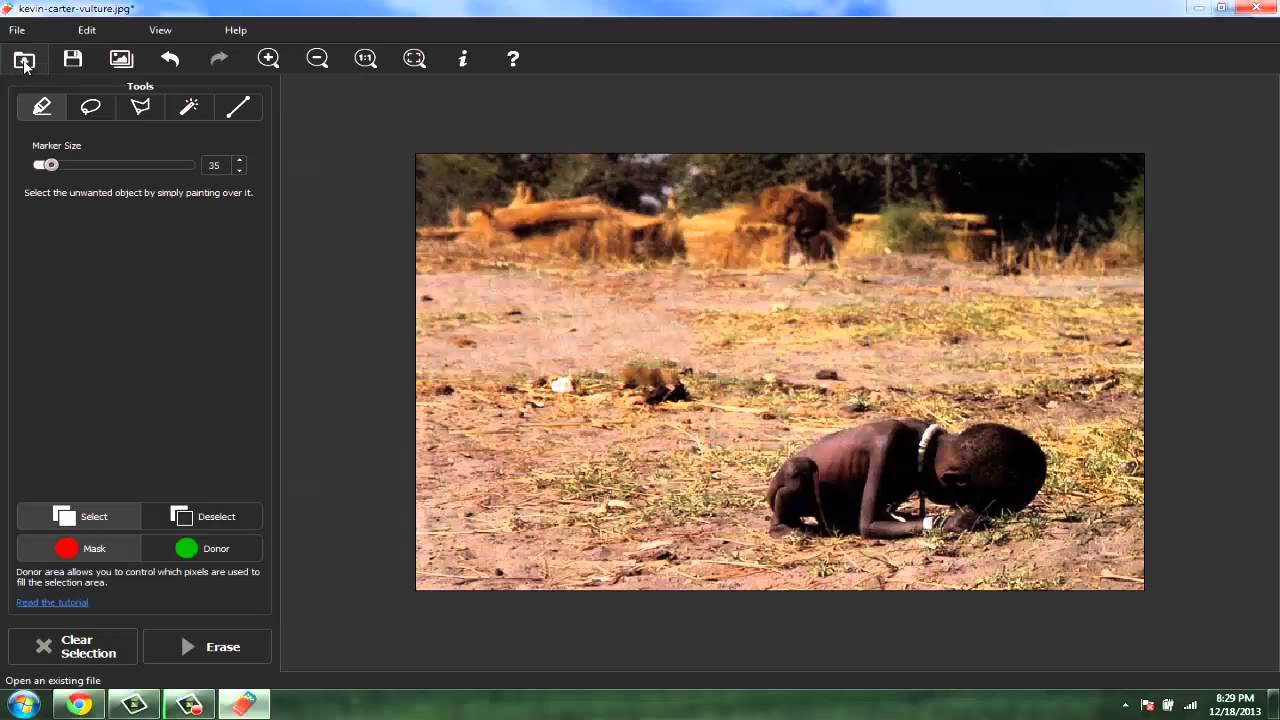

When the strength parameter is set to 1 (i.e.The autoencoding part of the model is lossy.Faces and people in general may not be generated properly.The model struggles with more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”.The model does not achieve perfect photorealism.The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model. Probing and understanding the limitations and biases of generative models.Safe deployment of models which have the potential to generate harmful content.Applications in educational or creative tools.Generation of artworks and use in design and other artistic processes.Possible research areas and tasks include The model is intended for research purposes only. It is a Latent Diffusion Model that uses two fixed, pretrained text encoders ( OpenCLIP-ViT/G and CLIP-ViT/L). Model Description: This is a model that can be used to generate and modify images based on text prompts.License: CreativeML Open RAIL++-M License.Model type: Diffusion-based text-to-image generative model.Strength= 0.99, # make sure to use `strength` below 1.0
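The `strength` caveat can be made concrete with a small sketch. It assumes, as the diffusers inpainting pipelines appear to do, that the number of denoising steps actually run is `int(num_inference_steps * strength)` capped at `num_inference_steps`, and that only `strength == 1.0` starts from pure noise instead of a noised copy of the input image. The function names are hypothetical and are not part of any library.

```python
def effective_denoising_steps(num_inference_steps: int, strength: float) -> int:
    """Steps actually run for a given strength (assumed rule, see lead-in)."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    # Assumption: floor(num_inference_steps * strength), capped at the total.
    return min(int(num_inference_steps * strength), num_inference_steps)


def starts_from_pure_noise(strength: float) -> bool:
    """Assumption: only strength == 1.0 discards the input image entirely."""
    return strength == 1.0


for s in (0.5, 0.99, 1.0):
    print(s, effective_denoising_steps(20, s), starts_from_pure_noise(s))
```

Under this assumption, `strength=0.99` with 20 steps still runs 19 of them while keeping the input image as the starting point, which is consistent with the advice to stay just below 1.0.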

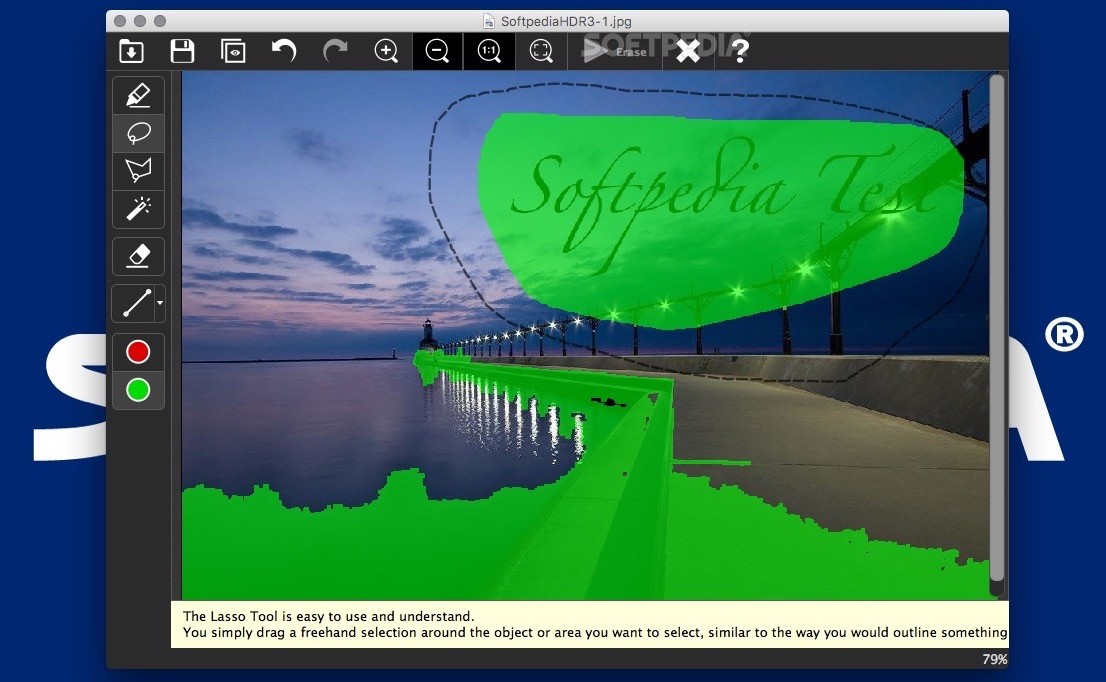

SD-XL Inpainting 0.1 is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input, with the extra capability of inpainting the pictures by using a mask.

SD-XL Inpainting 0.1 was initialized with the stable-diffusion-xl-base-1.0 weights. The model is trained for 40k steps at resolution 1024x1024, with 5% dropping of the text-conditioning to improve classifier-free guidance sampling. For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and, in 25% of cases, mask everything.

To run the model with diffusers (the two URLs are left as placeholders because they are not given in this post):

    from diffusers import AutoPipelineForInpainting
    from diffusers.utils import load_image
    import torch

    pipe = AutoPipelineForInpainting.from_pretrained("diffusers/stable-diffusion-xl-1.0-inpainting-0.1", torch_dtype=torch.float16, variant="fp16").to("cuda")

    img_url = "..."   # placeholder: URL of the input image
    mask_url = "..."  # placeholder: URL of the mask image
    image = load_image(img_url).resize((1024, 1024))
    mask_image = load_image(mask_url).resize((1024, 1024))

    prompt = "a tiger sitting on a park bench"
    generator = torch.Generator(device="cuda").manual_seed(0)

    image = pipe(
        prompt=prompt, image=image, mask_image=mask_image, generator=generator,
        num_inference_steps=20,  # steps between 15 and 30 work well for us
        strength=0.99,  # make sure to use `strength` below 1.0
    ).images[0]

A note on edit mask/explicit copy in Gradio-based interfaces: the gradio Image component cannot accept image+mask as an output, which is the required way of explicitly copying a masked image to img2img inpaint/inpaint sketch/ControlNet (i.e. you can see the actual masked image on the panel, instead of a mysterious internal copying). Without this, you will not be able to edit the mask.

On the research side, the paper RePaint: Inpainting using Denoising Diffusion Probabilistic Models, by Andreas Lugmayr and 5 other authors, takes a different route.

Abstract: Free-form inpainting is the task of adding new content to an image in the regions specified by an arbitrary binary mask. Most existing approaches train for a certain distribution of masks, which limits their generalization capabilities to unseen mask types. Furthermore, training with pixel-wise and perceptual losses often leads to simple textural extensions towards the missing areas instead of semantically meaningful generation. In this work, we propose RePaint: A Denoising Diffusion Probabilistic Model (DDPM) based inpainting approach that is applicable to even extreme masks. We employ a pretrained unconditional DDPM as the generative prior. To condition the generation process, we only alter the reverse diffusion iterations by sampling the unmasked regions using the given image information. Since this technique does not modify or condition the original DDPM network itself, the model produces high-quality and diverse output images for any inpainting form. We validate our method for both faces and general-purpose image inpainting using standard and extreme masks. RePaint outperforms state-of-the-art Autoregressive, and GAN approaches for at least five out of six mask distributions.

There is also a free and open-source inpainting and image-upscaling tool that runs in the browser, powered by WebGPU and WASM.
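The conditioning idea in the RePaint abstract, sampling the unmasked regions from the given image at every reverse iteration, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' implementation: images are flat lists of floats, `known_mask` uses 1 for pixels that are given and 0 for pixels to generate, `alpha_bar_prev` is the cumulative noise-schedule product at the previous step, and `x_unknown_prev` stands in for the output of a pretrained DDPM's reverse step, which is not modeled here. All function names are hypothetical.

```python
import math
import random


def noised_known(x0, alpha_bar_prev, rng):
    """Forward-diffuse the given image to the previous step's noise level."""
    a = math.sqrt(alpha_bar_prev)
    s = math.sqrt(1.0 - alpha_bar_prev)
    return [a * v + s * rng.gauss(0.0, 1.0) for v in x0]


def merge_step(x0, known_mask, x_unknown_prev, alpha_bar_prev, rng):
    """One conditioned reverse step.

    Known pixels are re-sampled from the given image; the remaining pixels
    are taken from the (externally supplied) model prediction.
    """
    x_known_prev = noised_known(x0, alpha_bar_prev, rng)
    return [
        m * k + (1 - m) * u
        for m, k, u in zip(known_mask, x_known_prev, x_unknown_prev)
    ]


rng = random.Random(0)
x0 = [0.5, -0.25, 1.0]       # given image (toy, 3 pixels)
known_mask = [1, 0, 1]       # middle pixel is to be generated
model_out = [9.0, 8.0, 7.0]  # stand-in for the DDPM reverse-step output
print(merge_step(x0, known_mask, model_out, alpha_bar_prev=0.9, rng=rng))
```

Because only the sampling loop changes, the pretrained network itself stays untouched, which matches the abstract's point about not modifying or conditioning the DDPM. Note that the mask convention here (1 = keep) is the opposite of the `mask_image` convention in diffusers, where white marks the area to repaint.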
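Returning to the SD-XL Inpainting training notes: zero-initializing the weights of the 5 new input channels means that, right after the base checkpoint is restored, the first layer produces the same output as before no matter what the extra channels contain. The sketch below shows this with a 1x1 convolution at a single spatial location. The 4 base latent channels, the channel ordering and all function names are assumptions for illustration; only the 4 + 1 split of the extra channels comes from the text.

```python
BASE_IN = 4   # assumption: latent channels of the non-inpainting UNet
EXTRA_IN = 5  # from the text: 4 masked-image latent channels + 1 mask channel


def extend_input_weights(base_weights):
    """Append zero weights for the 5 new input channels to every output row."""
    return [row + [0.0] * EXTRA_IN for row in base_weights]


def pointwise_conv(weights, channels):
    """1x1 convolution at one location: out[o] = sum_i weights[o][i] * x[i]."""
    return [sum(w * c for w, c in zip(row, channels)) for row in weights]


def inpainting_input(noisy_latent, mask_value, masked_image_latent):
    """Concatenate noisy latent, mask and masked-image latent (assumed order)."""
    return noisy_latent + [mask_value] + masked_image_latent


base = [[0.1, 0.2, 0.3, 0.4], [1.0, 0.0, -1.0, 0.5]]
extended = extend_input_weights(base)

latent = [1.0, 2.0, 3.0, 4.0]
full_input = inpainting_input(latent, 1.0, [5.0, 6.0, 7.0, 8.0])

# With zero-initialized extra weights, both layers agree at initialization.
print(pointwise_conv(base, latent))
print(pointwise_conv(extended, full_input))
```

Training then moves the new weights away from zero, so the mask and masked-image channels gradually start to influence the output.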
